BASIC Memories

Two television shows from my childhood I thoroughly cherished were Mr. Wizard's World on Nickelodeon and MacGyver. In the former, Don Herbert as Mr. Wizard revealed how things found in any household can be assembled into devices that demonstrate scientific principles. In the latter, Richard Dean Anderson as MacGyver escaped seemingly impossible situations by improvising ingenious inventions out of whatever items were at hand. Both presented how to craft a rudimentary record player out of a sewing needle, paper, and a pencil:

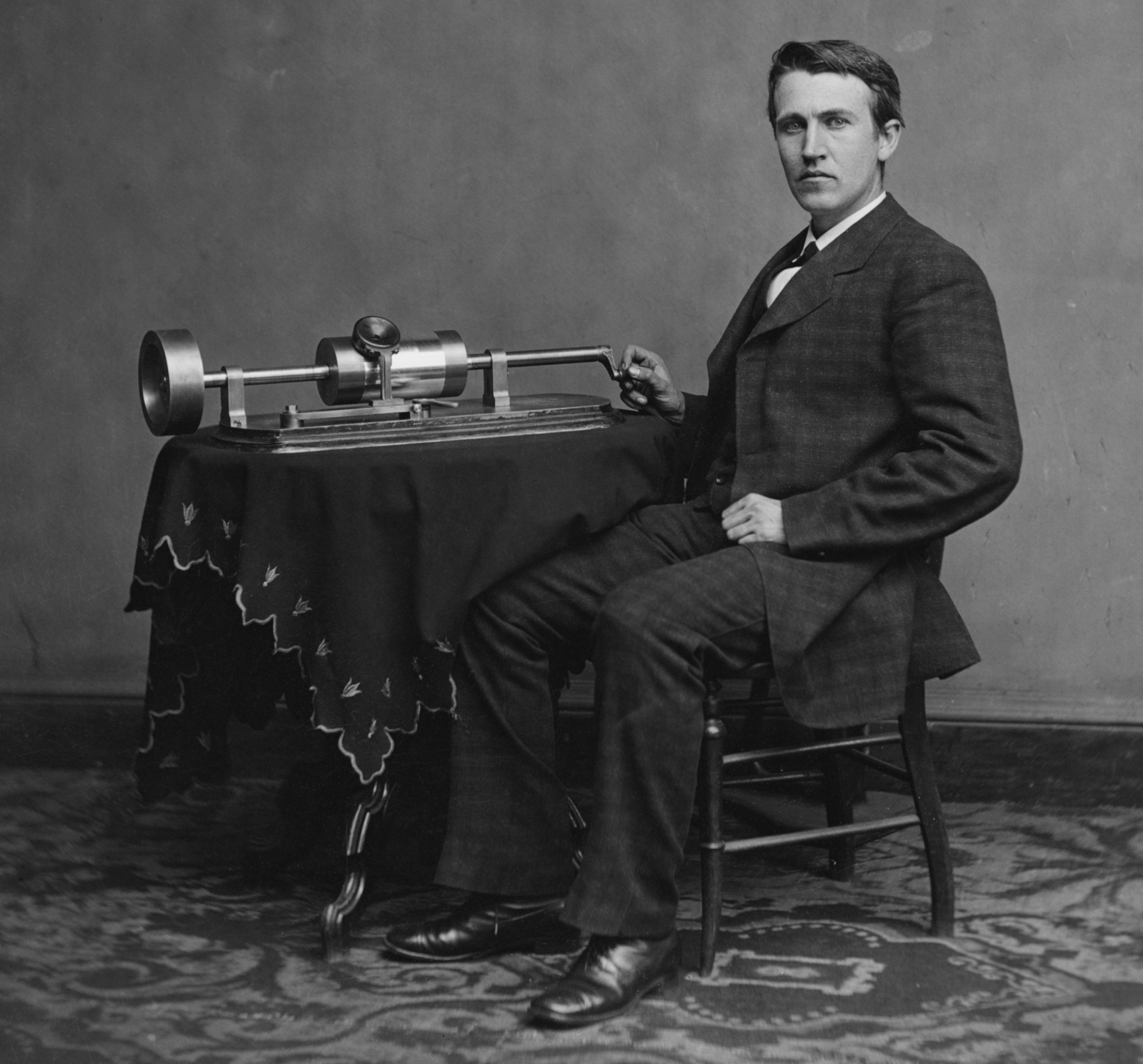

They inspired me to investigate Thomas Alva Edison, the inventor of the phonograph. Before the world wide web, that meant visiting my local library, where I discovered Edison’s earliest records were made of tinfoil wrapped around a cylinder. And due to the tinfoil’s malleability, his invention not only reproduced sound, it could record it.

As I perused the library, I came across books about other inventors. One detailed how Alexander Graham Bell employed electricity to transmit sound using a phonograph-like device that replaced the needle with a coil of wire and a permanent magnet:

Another explained how John Logie Baird televised moving images using a spinning cardboard disc cut from a hat box:

Those inventions seemed no more complex than the ones I saw on those television programs. So, I embarked on a mission to replicate them. Regrettably, being just a young child, none of my attempts panned out.

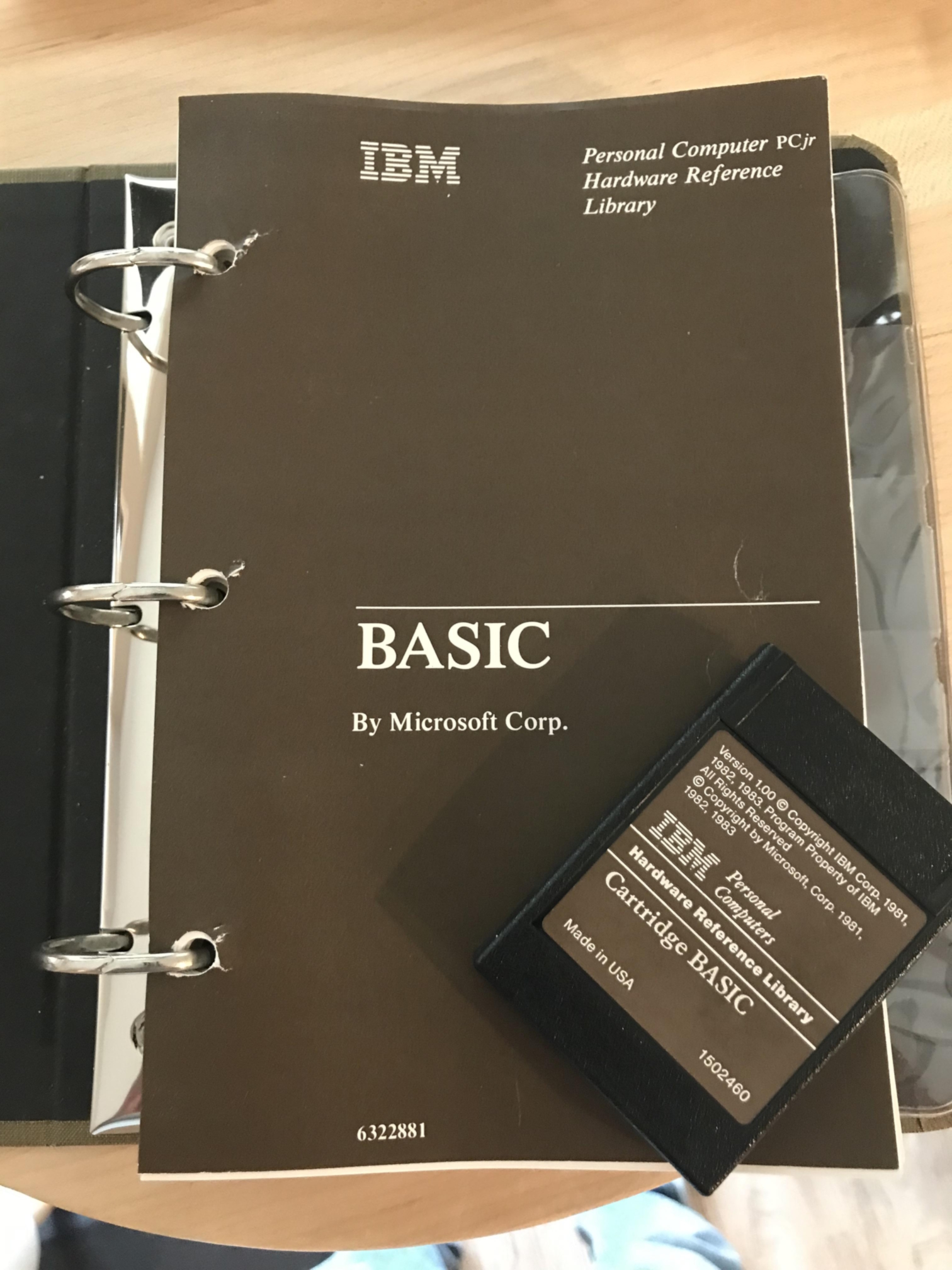

But in 1985, when I was in second grade, my family acquired an IBM PCjr, a stripped-down IBM PC with marginally enhanced graphics and sound for games:

It came equipped with an approachable and forgiving medium I could manipulate to invent things that really worked: the BASIC programming language.

Unlike physical inventions, I didn’t have to wait until all the components were meticulously crafted and assembled to see if they functioned. Instead, the plainly named “Cartridge BASIC” provided an interactive environment that delivered instant feedback. In one of my first forays, I instructed the computer to draw a circle. It did, but not where I wanted it. However, with just a few taps on the keyboard, I made it move. The next step was obvious: I got the circle bouncing around the screen. In mere minutes of tinkering, I was already halfway to a Pong clone.

That process of incrementally breathing life into a game filled me with an exhilarating sense of accomplishment. It was so satisfying. I couldn't wait to explore further.

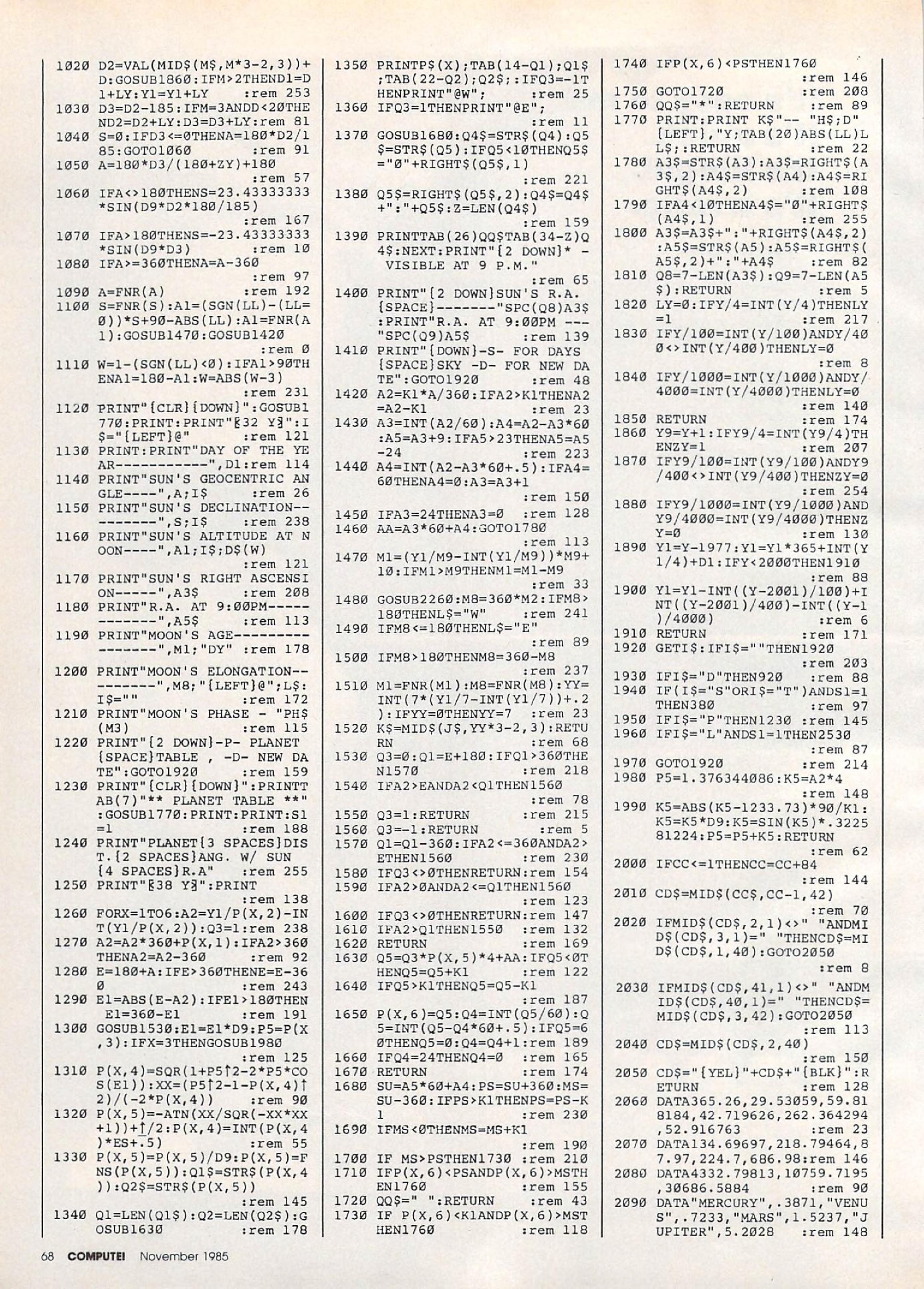

Upon returning to the library, I unearthed plenty of books and magazines brimming with type-in BASIC listings. Many were tailored for young learners, such as the classic BASIC Computer Games, a relic of 1973:

I felt like I had stumbled upon ancient hieroglyphic scrolls:

What secrets lay concealed within those lines? What would be revealed by deciphering them? I had to know.

Meticulously keying in thousands of symbols was grueling. But the process built up a sense of excitement and anticipation. I knew I’d be rewarded when I finally typed RUN. Though, more often than not, all my hard work yielded nothing more than a heaping pile of syntax errors, not just due to typos, but because of BASIC dialect incompatibilities.

If the code contained proprietary instructions, like those enigmatic PEEK and POKE commands, then it was nearly impossible to adapt. But if such code was written specifically for the PCjr, then the hardware masters who crafted those magical instructions provided a key to unlocking features not found in the manual. And the details didn’t matter because I could just copy and paste the instructions into my own projects.

On a side note, all true BASIC adventurers eventually attempt to realize the infinite monkey theorem by writing a program that pokes random values to random addresses. Given enough time, what kind of extremely improbable events would such a program manifest? Could it create artificial intelligence? A self-aware machine? Nope. The outcome is usually less than spectacular: random characters and colors flash on screen, the disk drive activity light flickers, the speaker emits some beeps, and then the computer invariably crashes.

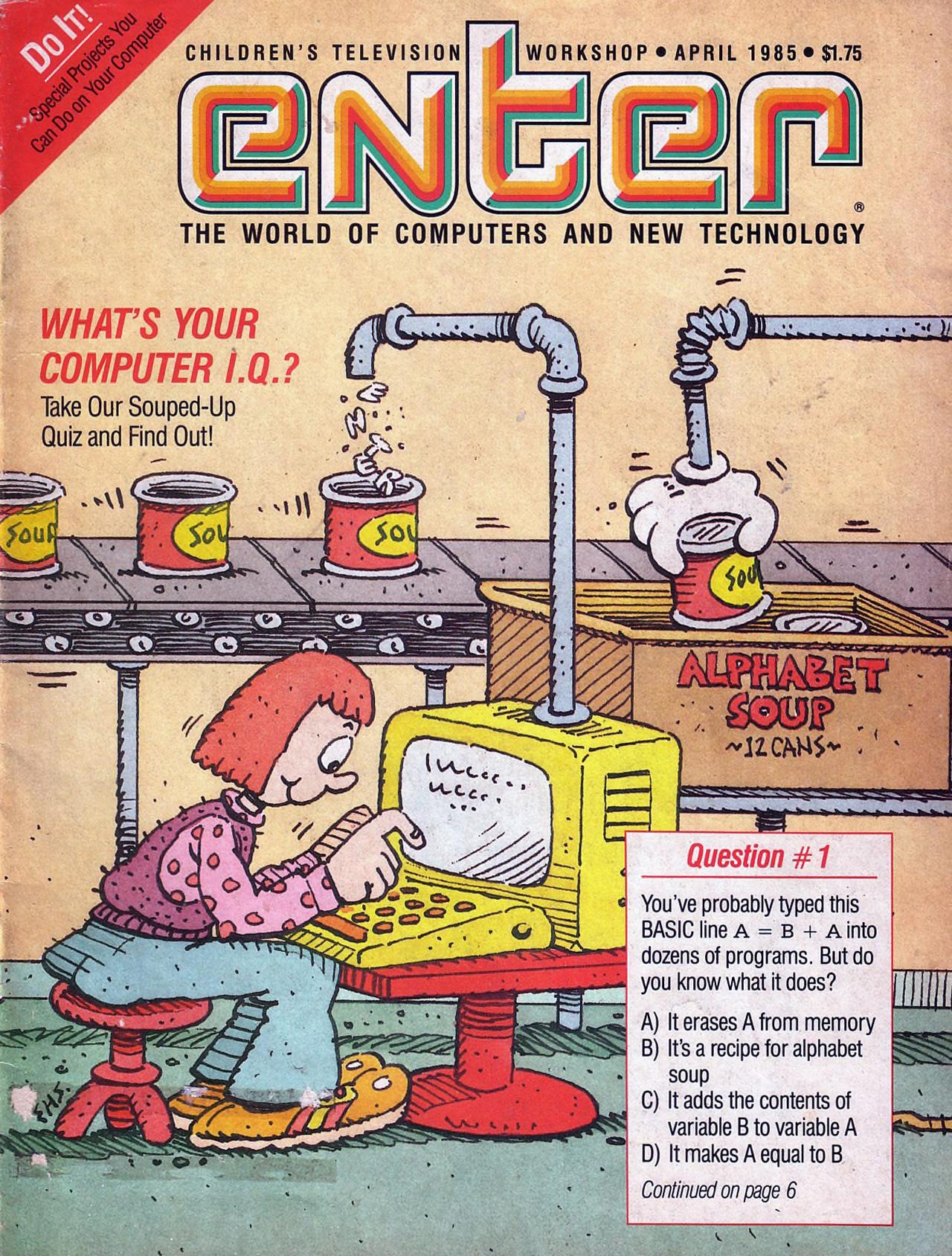

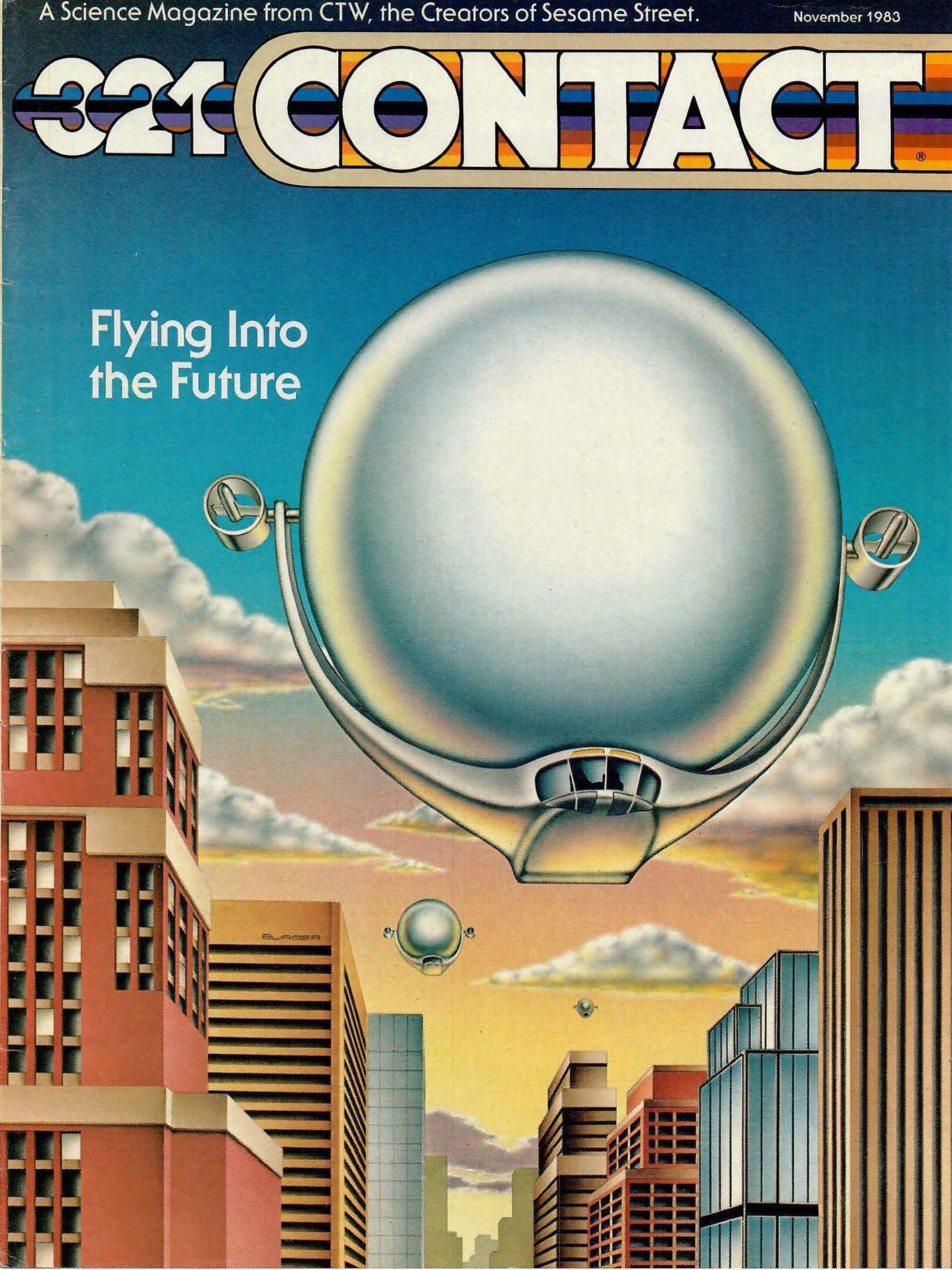

I obtained subscriptions to Enter and 3-2-1 Contact, magazines aimed at school-age children that showcased BASIC games contributed by their readers:

As an incentive, they offered a reward of $25 for submitting a program deemed worthy of publication:

I created a few games I intended to send in, but sadly I never did. Nonetheless, studying the listings in those magazines made me feel like I was part of a community of digital explorers sharing discoveries.

At that time, arcade cabinets were ubiquitous. I played them in 7-Eleven, restaurants, the roller rink, ShowBiz Pizza Place, and even the corner mom-and-pop deli. I understood the PCjr was nowhere near as powerful as those machines. Nonetheless, I made valiant attempts to port what I saw in the arcades to BASIC. The far from perfect results were at best crude approximations. But the process of creating them was undeniably enjoyable.

There was one place I saw much better approximations of arcade games: the kids who lived across the street owned an Atari 2600:

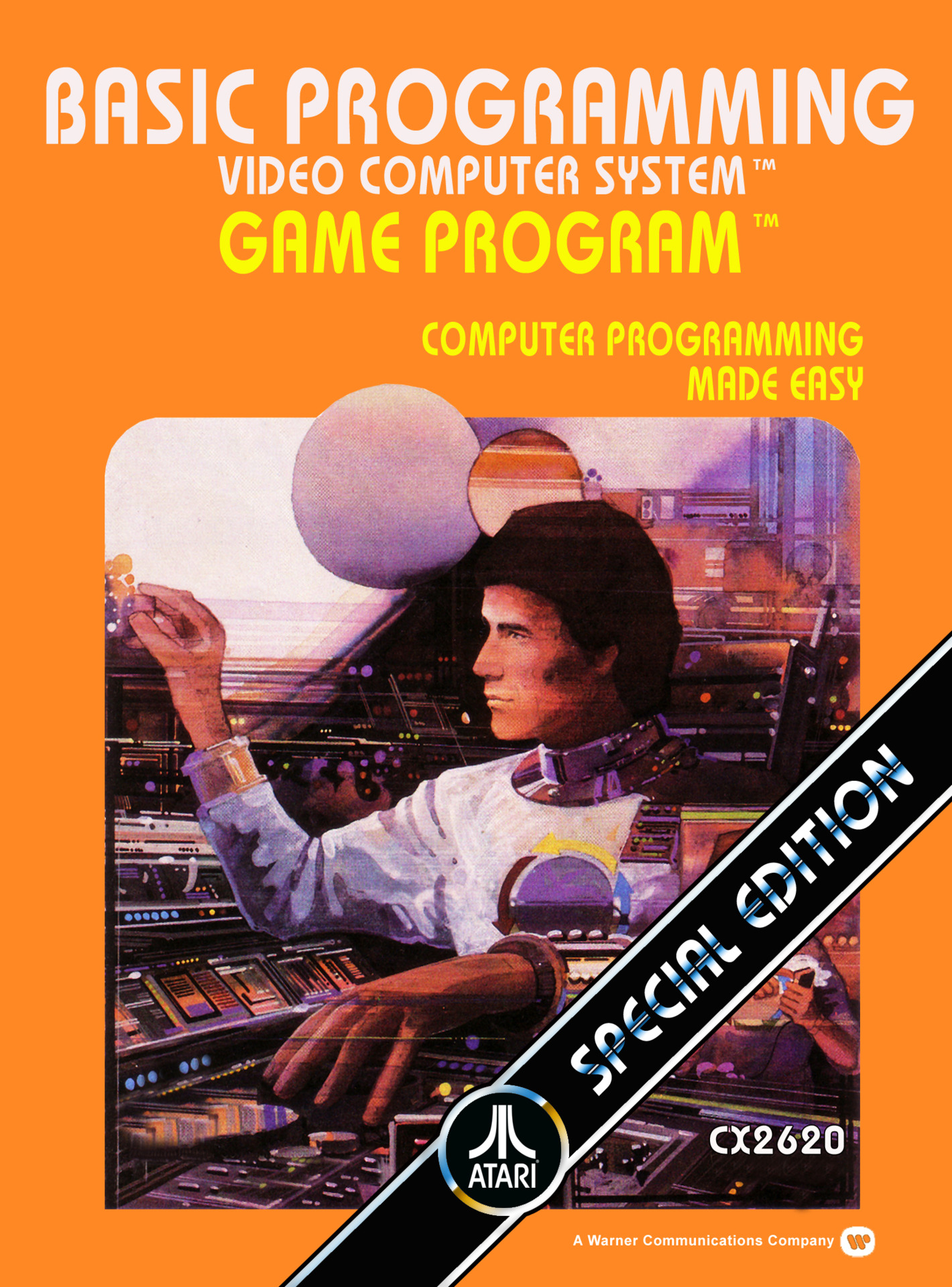

When I heard they were selling it to buy some new-fangled console from Japan called Nintendo—whatever that was—I knew I had to acquire it. Sure, I wanted to play Atari games. But I was far more interested in one particular cartridge:

Upon exchanging every last penny in my piggybank for the system, I gazed deeply into the Atari 2600 BASIC Programming box cover. My imagination took flight. I was transported into a futuristic universe, an amalgamation of Star Trek and 2001: A Space Odyssey. My mind raced with visions of what it would be like to develop BASIC programs on a platform engineered for gaming. That cartridge was the means to express my boundless creative energy. I had the know-how, and now the technology. With that canvas to craft digital wonders, I’d finally be able to transform my dreams into interactive realities.

When I inserted the cartridge, attached the keyboard peripheral to both input jacks, and powered up the system, I discovered that due to the severe memory limitations of the Atari 2600, the development framework I held in such high regard was constrained to just nine lines of BASIC code. My excitement withered into disillusionment. There was no space to accommodate the ambitious projects I envisioned.

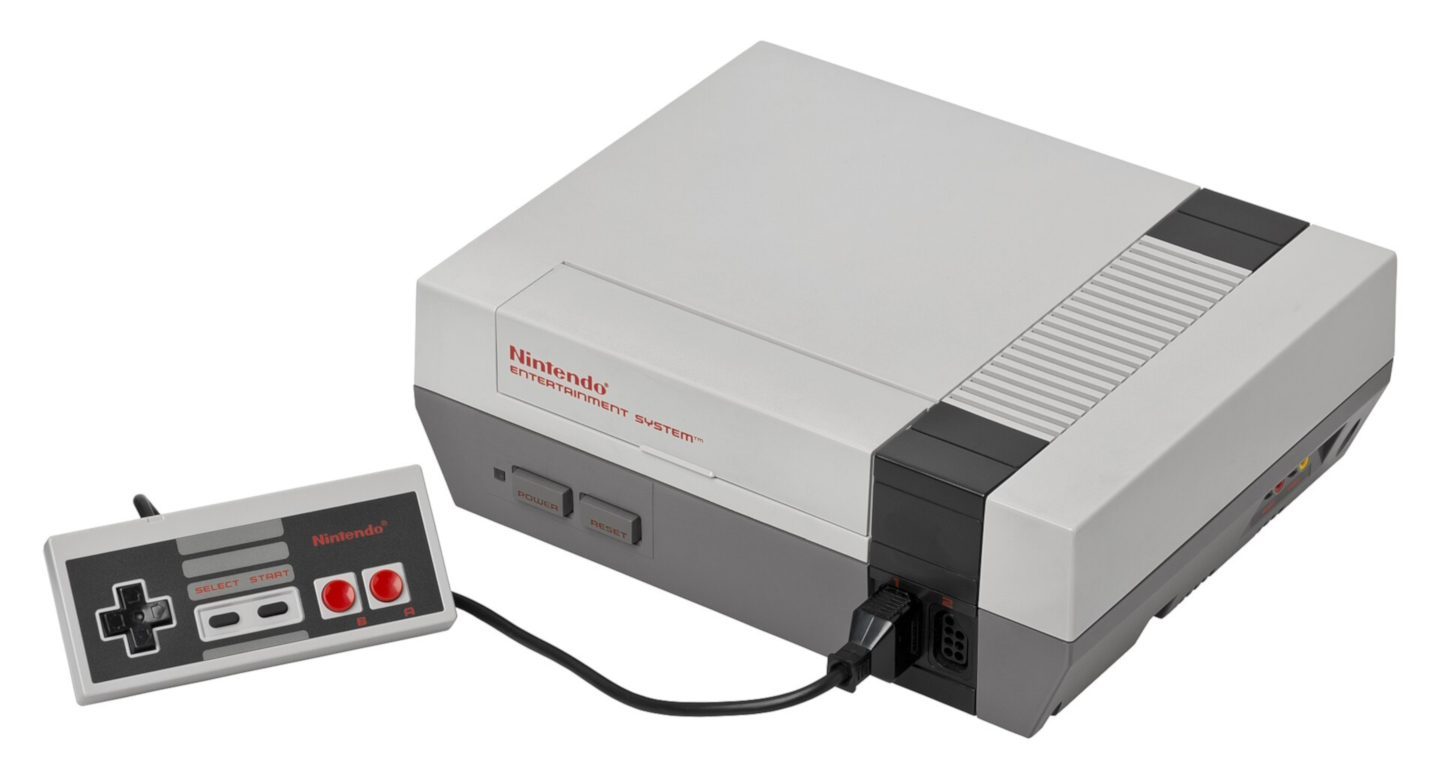

I felt like I’d been robbed. That feeling was subsequently amplified tenfold when I learned about the vastly superior Nintendo Entertainment System acquired by the kids across the street that was partly funded by—yet not owned by—me.

Nevertheless, the experience offered a valuable lesson in the importance of managing one's expectations and the realities of marketing tactics. It also taught me what I now know as the age-old adage, “Let the buyer beware.”

As Nintendo swept across America, tables covered with Atari 2600 cartridges selling for mere pennies became a common sight at retail stores and garage sales. Before long, I amassed a substantial collection. And I had a lot of fun with them. They kept me determined to create my own games for the system. But how?

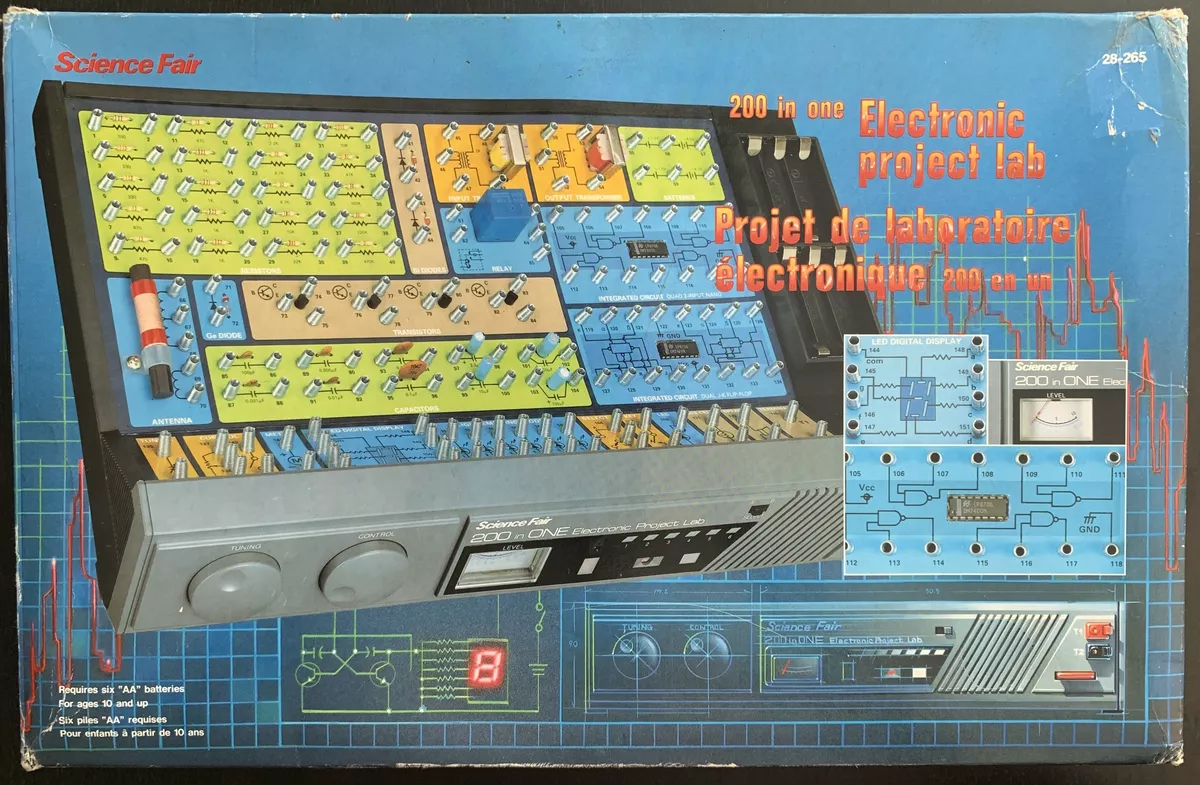

I owned a few RadioShack electronics learning kits, like the 200 in One Electronic Project Lab:

The kits enabled users to assemble projects with a system reminiscent of paint-by-numbers. While their manuals didn’t dive deep into circuit theory, they conveyed broad concepts, and they explained the elemental components: resistors, capacitors, inductors, transformers, relays, switches, LEDs, diodes, transistors, etc.

Exploring those kits made me wonder what was inside an Atari 2600 cartridge. I speculated that the game's instructions must be represented as sequences of electronic components. That made me contemplate the possibility of decoding the sequences and creating my own games by wiring up custom carts. Before the web, the only means to test my hypothesis was dissection. A quick inspection of a plastic shell revealed an Atari 2600 cartridge isn’t meant to be opened. It doesn’t have any screws. Despite that, I determined a screwdriver was the right tool for the job. I picked my least favorite game, and I spent a good five minutes prying it open. I was astonished by what I found inside:

I instantly recognized what that was: an integrated circuit, thousands of components miniaturized onto a silicon chip and packaged into a little black rectangle. I wasn’t going to be able to replicate that. The realization left me disheartened. But I recovered as I returned to programming the PCjr.

Meanwhile, in elementary school, principles I learned from BASIC appeared to align with what was being taught. For instance, consider this question from a first-grade math worksheet:

The blank worked like a variable, and the equals sign like the assignment operator, or so I thought. But in fourth grade, blanks were given names, and the true purpose of the equals sign was revealed. For example, in the following expression, solve for x.

The sudden shift from equals meaning “assign the blank to the answer” to “both sides have the same value” undoubtedly broke many students’ brains. But for me, the concept wasn’t new. I understood the equals sign played different roles depending on the context. I had already written similar expressions in if-statements.

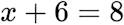

Interestingly, to sidestep such confusion, Atari 2600 BASIC employs the left-arrow assignment symbol from mathematics instead of the conventional equals sign:

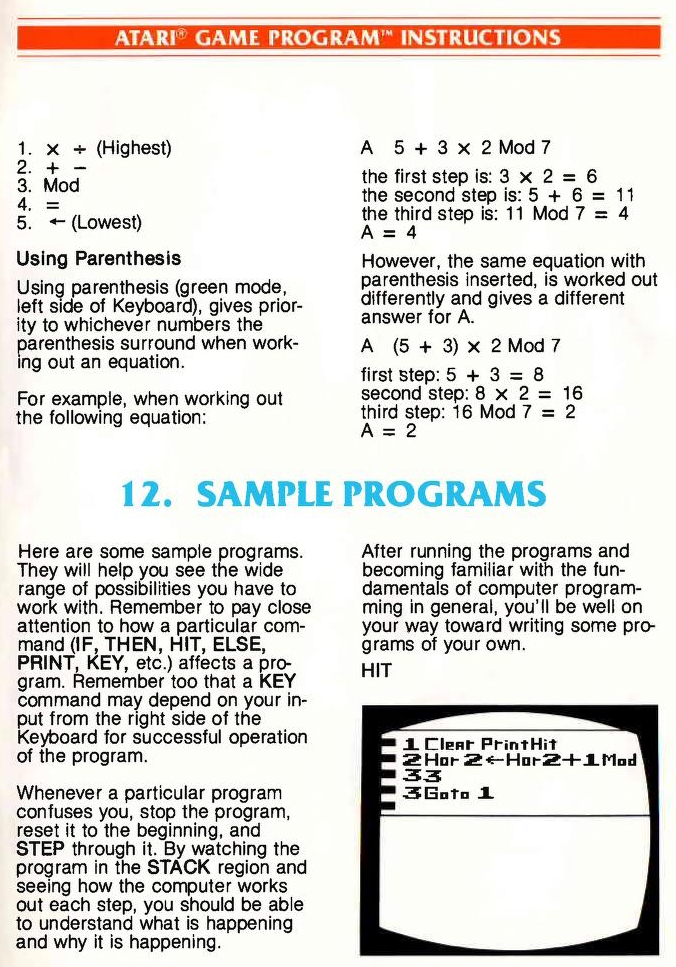

I was a very lazy student. In fifth grade, we were taught long division. Recall that when a four-function calculator performs division, it displays a decimal value, not a remainder. The teacher emphasized this fact to convey there were no sneaky ways to evade the impending laborious long division exercises he was about to assign. However, I remembered seeing an operator in the PCjr Cartridge BASIC manual called MOD:

So, I cheated. Unfortunately, I got the assignment returned with a message from the teacher written in large red letters across the top that read:

REDO: Show your work!

Challenge accepted: I decided to create a program that printed all the intermediate steps. It took me about two weeks, during which I was forced to manually complete those repetitive assignments. Alas, by the time I was done, our class had moved on to other topics.

While I was never able to apply my homework-cheating software, creating it led me to realize the class was taught how to do long division, but not why the method works. My program exposed that what we were doing with paper and pencil was manually executing an algorithm that breaks the larger problem down into smaller steps simple enough to solve directly. It made me appreciate one of the most remarkable features of algorithms: they empower problem-solving even without knowledge of their theoretical basis.
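For illustration, the paper-and-pencil procedure can be sketched in a few lines. This is not the original program, which was written in BASIC and is long gone; it is a minimal Python sketch, and the function name `long_division_steps` and the wording of each printed step are assumptions made for the example. It brings down one digit at a time, divides the small running value, and carries the remainder forward, which is the same remainder the MOD operator produces.

```python
def long_division_steps(dividend, divisor):
    """Divide digit by digit, recording each intermediate step.

    Returns (quotient, remainder, steps). Hypothetical helper for
    illustration; assumes a non-negative dividend and positive divisor.
    """
    if dividend < 0 or divisor <= 0:
        raise ValueError("expected dividend >= 0 and divisor > 0")

    steps = []
    quotient_digits = []
    remainder = 0
    for digit in str(dividend):
        # "Bring down" the next digit next to the running remainder.
        current = remainder * 10 + int(digit)
        # Each sub-problem is small enough to solve directly.
        q = current // divisor
        remainder = current % divisor  # the MOD step
        steps.append(f"{current} / {divisor} = {q} remainder {remainder}")
        quotient_digits.append(str(q))

    quotient = int("".join(quotient_digits))
    return quotient, remainder, steps


if __name__ == "__main__":
    quotient, remainder, steps = long_division_steps(1234, 5)
    for line in steps:
        print(line)
    print(f"Answer: {quotient} remainder {remainder}")
```

Each loop iteration corresponds to one row of work on paper, so the list of steps is exactly the "show your work" trail.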

I hated school for all the usual reasons. But one person made showing up worthwhile: my seventh-grade math teacher. His classes were a mix of learning, entertainment, and motivation. He constantly experimented with different ways to keep everyone engaged. For instance, he hosted game-show-style competitions, where pairs of students raced to solve math problems on the blackboard. He also had an inherent wit, which he embraced to weave jokes and short stories in between lessons. And like a coach rallying a sports team, he often delivered encouraging speeches that instilled confidence and perseverance.

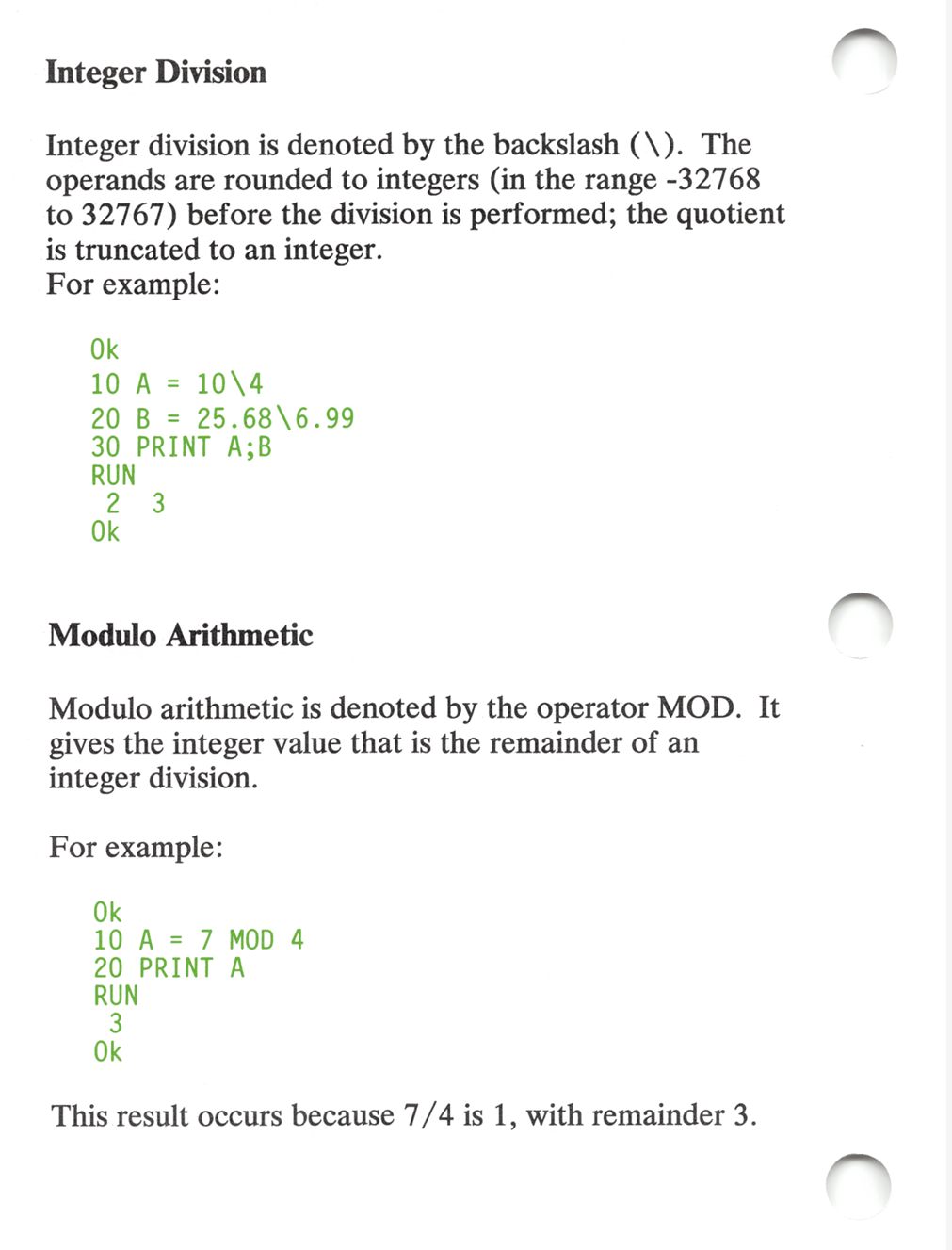

Best of all, once every two weeks he wheeled three carts into the back of his classroom, each carrying an Apple II, which he used to teach a subject I was well versed in: BASIC programming. Each lesson started on the blackboard, where he would explain how to translate a recently discussed math topic into BASIC code. Then, since there were only three machines—the school’s entire computational resources, by the way—he partitioned the class into teams and constantly rotated who was at the keyboard until each team completed the assignment.

Toward the end of the school year, he announced his “Ten Challenges”, a set of programming problems something like “Compute the first 100 primes”, “Print Pascal’s triangle”, “Find the greatest common divisor”, and so on. Each was worth extra credit, and he would ensure the three computers would from then on be available in the study hall classroom for those willing to tackle them.

The announcement ignited a fire within me. I felt like I’d been training for the past five years just for his challenges. I needed to immerse myself, to explore, analyze, and conquer them. Study hall couldn’t come soon enough. While I waited, I mentally prepared myself to race to one of the machines and fend off my competitors. But when the time came, I found myself alone. I was the only one interested, not just in the challenges, but programming in general. Everyone else preferred to use the free period to goof off.

Over the next week or so, I completed all ten challenges, some on my PCjr. I triumphantly strolled into his classroom on a Monday morning with a bundle of tractor feed printouts, eagerly anticipating the smile on his face when he saw how fast I vanquished them. But something was wrong. It was a BASIC programming lesson day, yet the three computers were not in the back of the classroom. More notably, our teacher was missing. After several confusing minutes, the school principal walked in with someone I didn’t know. The principal explained our teacher had been replaced, and he proceeded to introduce the new guy.

After a day of bewilderment, I received news of what happened: my favorite teacher was arrested at his home and charged with multiple acts of aggravated sexual assault for allegedly touching a 13-year-old student! I never saw him again.

His replacement didn't know anything about computers. There would be no more BASIC lessons and no recipient of my solutions to the Ten Challenges. For the rest of seventh grade and the entirety of eighth grade, the three Apple IIs languished in the back of the study hall classroom. Sometimes I used them to pass the time by writing short programs.

To dispel the boredom of one of my last study halls, I rearranged the keys on a keyboard. I should have quit, but I foolishly pried off the spacebar and the reset button. And much to my horror, I couldn’t get them back on. Unlike a modern PC, an Apple II keyboard is integrated into the case. It can’t be swapped for a new one. With images of detention and a large repair bill racing through my mind, I quietly tiptoed away from the broken machine hoping no one noticed who did it.

Later that day, during social studies class, the school’s guidance counselor and the new math teacher appeared in the doorway. They were scanning the room. Suddenly, the math teacher pointed right at me. I froze and attempted to look innocent. But I was betrayed by my forehead, which started spewing beads of sweat. Then they turned and left. I crumbled into my seat, relieved. Minutes passed. The incident faded from my consciousness. Then out of nowhere, an announcement blasted over the classroom’s PA system. It ordered me to the guidance counselor’s office! From the pit of my stomach, a foreboding sense of impending doom welled. I mustered the strength to rise. And I slumped my way out.

Upon entering her office, the guidance counselor stated she hunted down all the eighth-grade computer nerds. Unsurprisingly, that meant just me. However, I wasn’t there because of a broken keyboard. Instead, she described a new and wondrous science and technology high school with computers in every classroom, engineering and research labs, state-of-the-art high-end graphics workstations, professional video editing equipment, a teleconferencing room, a virtual reality system, an industrial 3D printer, and on and on. Plus, they would equip each student with a modern computer in their home. Nowadays, magnet high schools are all the rage. But back in 1992, this was unheard of.

The school was searching for fifty students for its inaugural class. Meaning, if I went there, not only would I be surrounded by like-minded individuals, but I also wouldn’t have to deal with the social bullshit of the high school pecking order. I would effectively have senior status for all four years.

I felt like I was being offered a golden ticket to Willy Wonka's Chocolate Factory. I was so elated that instead of merely walking back to social studies class, I joyfully skipped down the hallway. And when I entered the classroom, I didn't stop. I skipped a full lap around the perimeter before settling into my seat. After some giggles, the room fell silent. All eyes were fixed on me. All mouths were agape. Then I simply tossed the teacher an ear-to-ear smile. Apparently, he accepted I was on cloud nine because he just smiled back and carried on with his lecture.

But getting into that school wouldn't be that easy. The admissions process required middle school transcripts, an SAT-like exam, an essay, an interview, and even a Rorschach-style inkblot test. And I was competing against the brightest students from across the entire county. Yet I walked in there completely unprepared, and despite the daunting challenge, I got accepted.

How did I do it? Was I naturally adept at standardized tests, effortlessly acing them? Was I a linguistic prodigy capable of composing an essay that leaves a lasting impact on the heart and mind of the reader? Did the psychoanalytic results of my inkblot responses unlock the gateway to the opportunity?

The enigmatic key to my acceptance was revealed in my senior year. By then, the original class of fifty students had dwindled down to forty as those who couldn’t keep up dropped out. We were at a college prep retreat, where one lecturer explained the value of conducting interviews, even for candidates with weak high school grades, low SAT scores, and a subpar entrance essay. He said that on extremely rare occasions, applicants’ words can be so compelling they persuade the interviewer to take a chance and allow them in.

Later that day, during a dinner attended by our entire class, our teachers, and the lecturers, one of my fellow students asked my chemistry teacher, who was also the head of our high school’s admissions committee, if an interview really held that power. My chemistry teacher said, “Yes. In fact, it happened, exactly once. There was one candidate who submitted an average middle school transcript, who failed the entrance exam, who wrote a lackluster essay, but won us over in the interview. And that person was...” And he named me! I was shocked. In retrospect, I should have taken that as a compliment. But in that moment, it felt like he told the entire class I didn't deserve to be there. I reflexively shouted, “Fuck you, professor!” Beside me, a friend gazed in astonishment, his eyes wide and jaw dropped. Yet no one else seemed to notice my outburst. Both of us exchanged baffled looks, surprised by the lack of repercussions. The dinner conversation simply moved on to other topics, as if nothing had happened.

It remains a mystery why the reaction to my regrettably harsh vocalization was localized to the person seated next to me, but I do know what transpired during my interview. My interview was conducted by my future physics and humanities teachers. They began with some easy ice-breaker questions like, “Why do you want to go to our school?” But I fumbled my responses, not due to nerves, but inexperience. After a few awkward minutes, I could sense they were already set to dismiss me. Then my future humanities teacher asked, “Who do you consider your hero?” I proudly said, “Thomas Edison.” I swear I saw her eyes roll. Then she posed a challenging question: “If Thomas Edison was so smart, why didn’t he invent the computer?” Thomas Edison really was my hero. Her question felt like an insult to him, and by extension, to me. Something snapped inside. I retorted:

“Edison is well-known for inventing the light bulb. But what you may not know is that while he was investigating why bulbs burn out, he found he could get current to flow in the vacuum between the filament and a positively charged plate inserted inside the bulb. This led to the discovery of thermionic emission, the basis of vacuum tubes, those things that powered the first generation of computers. It’s also the principle behind the cathode-ray tube that displays a picture inside a computer monitor. And in early computers, they served as data storage devices, memory.

“Edison’s first financial success was the stock ticker. He created a system that transmitted digital information from a keyboard to a remote printer. And it was just one of several of his inventions that advanced telegraphy. His work eventually led to today’s telecommunication and computer networks.

“Edison invented the phonograph, the first data storage medium. We have floppy disks today because he created records back then. On the topic of audio, he created the carbon telephone transmitter, what we now call a microphone. He also invented the movie camera. All the multimedia you see on modern computers originated from his pioneering contributions.

“Edison developed the alkaline storage battery, a much lighter battery than the lead-acid batteries that came before it. It was a step toward even lighter batteries that we find in laptops today...”

I continued to link all of Edison’s work to computers, no matter how much of a stretch. When I was done, my interviewers looked stunned, but impressed. From there, my interview evolved into a discussion. I told them about all my failed attempts to recreate Edison’s inventions and those of other inventors. I explained how I found solace in BASIC programming, a medium where I could build things that really worked. And I said if I went to their school, perhaps I’d finally gain the engineering skills to be a real inventor. With those words, they decided to take a chance on me.

Over the course of my high school years, I participated in numerous engineering projects. In a memorable one, I collaborated with a team of my peers in a product design competition. Owing to either poor scheduling or procrastination, we ended up pulling an all-nighter before the deadline. And since the product depended on circuitry for which we lacked the expertise, we roped in our electronics professor. He quickly informed us that the requirements were too complex to build the circuits in a night. That is, unless we used a microcontroller.

Before the Raspberry Pi, before the Arduino, there was the BASIC Stamp, released just that year:

Our professor explained how it could do the job. But he warned us it was unlikely we could learn how to program it and write the code before morning. However, in a moment reminiscent of an iconic scene from Jurassic Park, I glanced at the manual and confidently declared, “It’s a BASIC system! I know this!”

Tapping into my long history with BASIC, I banged out the program in under an hour. Incredulous, our electronics professor scrutinized my code, searching for flaws, while interrogating me. Once it was clear that my code met all the requirements, he remarked to everyone, “It’s scary how fast you wrote that.” That comment, much to my amusement, became a recurring refrain throughout the night.

We arrived at the contest in the morning, feeling utterly drained, and surveyed our competitors’ projects. They were assembled from cardboard, Legos, and popsicle sticks. In contrast, our project, with its 3D printed components, looked like a genuine piece of consumer electronics picked up from a big box store. That made us think we’d clinch the win. Unfortunately, when the judges came around, we were so sleepy we failed to attach the power cables. The demo was a disaster. Nothing worked. We returned home empty-handed. But we learned presenting an idea was just as important as all the effort that made it a reality.

Back at home, now equipped with a more powerful DOS PC, I was an avid user of Prodigy, a pre-web dialup service with a browser-like interface:

I primarily hung out in the programming message board, a community where users exchanged advice on crafting QBasic games, among other programming topics. Frustratingly, the Prodigy message boards allowed only text posts, and messages were capped at 2,000 characters. Though they did provide the ability to import and export messages, making it possible to write longer messages offline, if you were willing to partition them. That gave me an idea.

I wrote a QBasic program that could split a binary file into message-sized text files by encoding each byte into a pair of hexadecimal digits. My program was also able to merge such text files back into the original binary file. Shortly after posting it, the programming message board devolved into a platform for pirating commercial games and sharing pornographic imagery, with many uploads spanning hundreds of messages. A savvy user suggested I should have employed Base64 encoding to shrink the output, explaining the concept with a program written in the C language. “C? What was that?” I wondered. However, before I could figure it out, the message board moderators spoiled the fun by deleting the posts.
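The encoding scheme described above can be sketched as follows. This is not the original QBasic program, which no longer exists; it is a minimal Python illustration. The helper names `split_to_messages` and `merge_messages` are hypothetical, and the 2,000-character default is taken from the message cap mentioned earlier. The last few lines compare the hex output size against Base64 to show why that suggestion would have shrunk the uploads.

```python
import base64


def split_to_messages(data, max_chars=2000):
    """Encode bytes as hex text and cut it into message-sized pieces.

    Hypothetical helper for illustration. Each byte becomes two hex
    digits, so chunks are kept to an even length to avoid splitting
    a byte's pair of digits across two messages.
    """
    if max_chars < 2:
        raise ValueError("max_chars must be at least 2")
    chunk = max_chars - (max_chars % 2)
    hex_text = data.hex()
    return [hex_text[i:i + chunk] for i in range(0, len(hex_text), chunk)]


def merge_messages(messages):
    """Join the hex messages in order and decode back to the original bytes."""
    return bytes.fromhex("".join(messages))


if __name__ == "__main__":
    original = bytes(range(256)) * 10  # 2,560 bytes of sample data
    messages = split_to_messages(original)
    assert merge_messages(messages) == original

    hex_size = sum(len(m) for m in messages)
    b64_size = len(base64.b64encode(original))
    print(f"{len(messages)} messages, {hex_size} hex characters")
    print(f"Base64 would need {b64_size} characters")
```

Hex spends two characters per byte, while Base64 spends four characters per three bytes, so the same file fits in roughly two thirds as many messages.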

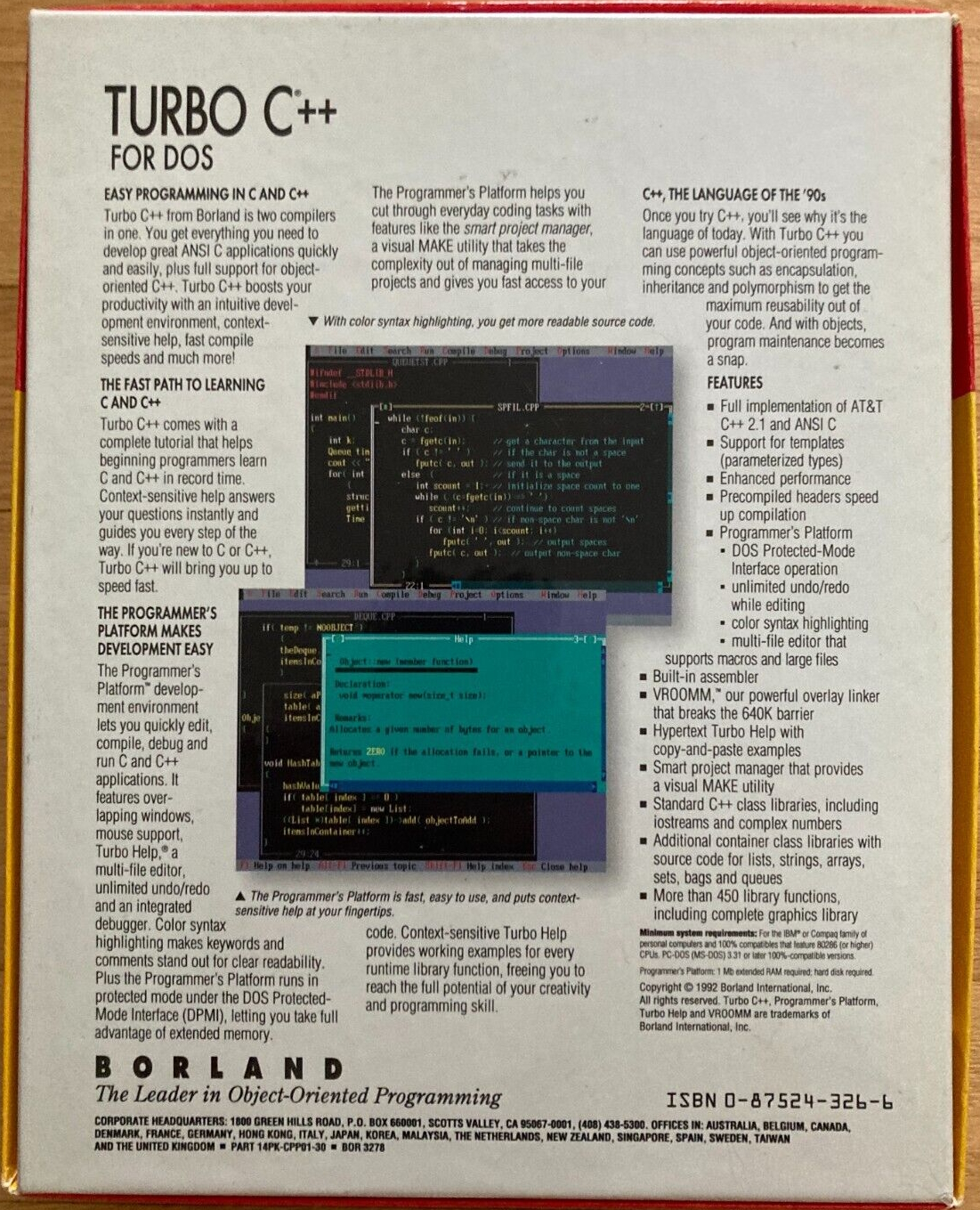

The legacy of BASIC endures in various forms to the present day. But my personal journey with BASIC ended in the summer of 1993, before my sophomore year of high school. During that time, 3-2-1 Contact magazine stopped featuring BASIC programs, prompting me to cancel my subscription. More significantly, I used the recess to investigate that mysterious C language. With claims of superior performance and capabilities, it sounded like an excellent platform for game development. By happenstance, on a trip to the mall, I discovered Borland Turbo C++ 3.1 for DOS on a shelf in Babbage’s, the chain store now known as GameStop, when they sold more than just videogames. I promptly snatched it up.

On the ride home, I marveled at the screenshots on the back of the box. They evoked that same sense of wonder and excitement I experienced many years prior when I gazed into the cover art of Atari 2600 BASIC Programming.

But unlike that lousy Atari cart, Turbo C++ fully lived up to my expectations. It was the real deal. I left BASIC behind.

All my BASIC programs have been lost to time. But that's alright. I really didn’t create anything worth saving, and they fulfilled their role as steppingstones along a path that eventually led to my career as a professional programmer. However, part of me still yearns for those simpler days when computers booted directly into BASIC. Its immediate accessibility, interactive environment, and forgiving nature fostered exploration and creativity. It greatly lowered the barrier for learning how to code. For me, and undoubtedly countless others who grew up in that era, BASIC sparked a lifelong passion for technology and computer science.

© 2023 meatfighter.com