Java AI vs. NES AI

[ About | Videos | Download | Run | Artificial Intelligence | References ]

This article describes a Java AI plug-in for the Nintaco NES / Famicom emulator that automatically plays against the NES AI featured in Spot: The Video Game, the NES port of the board game Ataxx.

The Java AI destroys the NES AI in the video below:

The Java AI takes on the NES AI again, but this time the Spot animations were disabled:

Check out 2 Java AI's vs. 2 NES AI's:

In the following video, a single Java AI goes up against 2 NES AI opponents.

Watch the Java AI battle it out against 3 other NES AI opponents.

The .zip contains:

To watch it play faster, select Machine | Speed | Max.

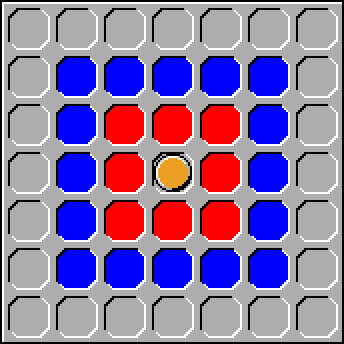

Spot is a board game played on a 7×7 grid. Two to four players take turns manipulating pieces of their designated color. A turn consists of moving a piece to an empty square located 1 or 2 squares away in any direction. If the destination is an adjacent square, shown red in the image below, then a new piece is introduced at that location. If the destination is two squares away, shown blue in the image below, then the piece jumps to that location. In both cases, all opponent pieces positioned in a square adjacent to the destination are converted to the player's color.

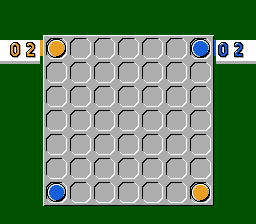

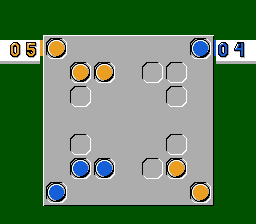

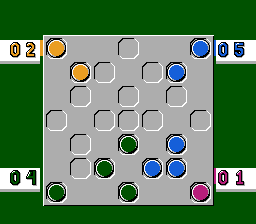

The images below depict the initial configurations for 2, 3 and 4 players, respectively. In the case of three, the third player can start in either lower corner.

On a turn, if a player is out of pieces or if none of his pieces can reach an empty square, then play is automatically passed to the next player. Play continues until every square is populated or only one player has pieces remaining. At that time, the player with the majority wins. Though, if more than one player share the majority, then it's a draw.

Before the game begins, the players can choose from among 512 built-in board layouts, each patterned with inaccessible squares. Alternatively, the players can actually create their own custom layout; the result does not need to be 4-way symmetrical, like the built-in ones, but the editor inhibits certain squares from being marked as inaccessible to guarantee that the layout is at least somewhat playable.

By default, each player can think about a move for as long as is needed; however, for an extra challenge, players can individually assign themselves a time limit of 30, 20, 10 or 5 seconds. Exceeding the limit forfeits a turn, passing play to the next player.

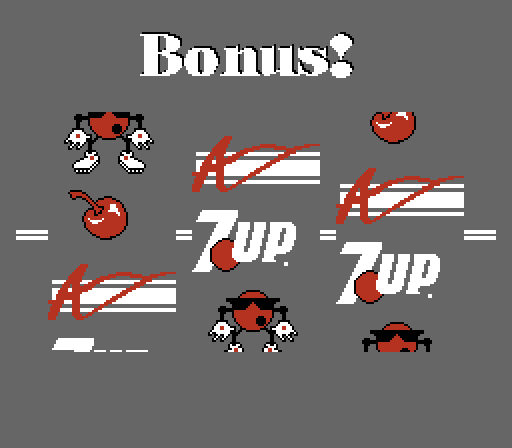

Just before the first move, the game clandestinely selects a random Secret Spot Square. Moving a piece to that location triggers a bonus game that resembles a slot machine (see below). Lining up three Spot mascots provides the player with an immediate extra turn. Three 7 Up logos yields seven extra seconds if the player's timer is enabled. Three cherries yields a cheat turn where the player may jump a piece to any free position on the entire board. Finally, three Arcadia Systems, Inc. logos allows the player to exchange all of his pieces with those of another player.

Refer to the Spot: The Video Game manual for further details.

Fully understanding how the NES AI operates would require a deep dive into its machine code, which has yet to happen. Even so, it is possible to conjecture based on behavioral observations. The NES AI responds nearly instantaneously, which suggests that it is not performing a recursive game tree search. Instead, it is likely just using an efficient heuristic function to rate its immediate moves, taking the one with the best rating.

The simplest option is to take the move that results in the maximum gain in pieces, the one that most increases the displayed scored value. And, given the limited capabilities of the NES hardware, it might actually be doing something that basic. But, in a typical game, the number of pieces owned by the players swings radically between turns. In fact, in the worst case scenario, 8 pieces are captured in a single turn; meaning, one player loses 8 while the other gains 8, an effective spread of 16, almost a third of the positions of the game board.

Reference [1] suggests a slightly more sophisticated way to rate the immediate moves. Starting from 0, it accumulates a sum using the following steps:

The sum favors moves that result in lots of captures and it prefers cloning over jumping, both of which contribute to the total number of pieces owned. But, it also rewards fortification, the amount of shielding around the source and destination squares. This causes the pieces to function more like a collective, protecting each other as the group advances as a whole.

The Java AI executes a game tree search tree, but it still relies on a heuristic function for intermediate nodes and that heuristic evaluates the entire game board position as opposed to moves. And, instead of producing a single value, the function rates each player individually, producing a list of values. For each player, starting from 0, it accumulates a sum using the following steps:

This heuristic takes into consideration the number of pieces, piece vulnerability and piece mobility. For vulnerability, any inaccessible adjacent positions, which includes the space outside the playfield and squares occupied by an opponent, act as form of shielding against attack. For mobility, it only examines jumps, treating the clone positions as possible attack points instead. And, the weights were assigned using intuition about the game and experimentation rather than machine learning techniques.

The Java AI uses an N-player extension of minimax that explicitly requires treating Spot as a zero-sum game, a game in which each player's gains or losses is exactly balanced by the losses or gains of the opponents. To better understand what this means, imagine that each player has a savings account in a common bank, each of which is filled with some arbitrary amount of money, and there is no outside source of additional funds. The initial amounts are actually irrelevant because a zero-sum game acting on the accounts would only track the movement of money between them. If the amount of money in one player's account increased, that means that the amount of money in one or more other players' accounts decreased. The cumulative changes can be expressed as a vector, a list of real numbers, one for each player that will be positive for a total gain and negative for a total loss. Since the vector only tracks the changes, as opposed to the absolute amounts in each account, the sum of the vector elements is always zero, hence the name of the game.

To conform to this idea, the vector produced by the game board position heuristic is adjusted such that the elements add up to 0. This is accomplished by treating each value as a gain. And, from the perspective of the opponents, that gain is a loss. The loss is divided up evenly among them and subtracted off. For instance, for 3 players, [A, B, C] is transformed into [A − B/2 − C/2, B − A/2 − C/2, C − A/2 − B/2], which sums up to zero.

Traditional 2-player minimax is also a zero-sum game even though it only requires an evaluation function that produces a single value representing the fitness of a position. That value, V, can be interpreted as the total gain/loss of the player that produced the position. And, if the total gain/loss of the player comes at the expense of the other, then the utility vector is always [V, −V]. This is actually the basis of the negamax algorithm, a simpler way to express minimax. However, since the only difference between the 2 elements is the sign of V, returning a vector is unnecessary. Yet, returning a scalar obscures the general nature of the game.

The move heuristic from reference [1] returns a value reflecting the differential gain/loss to the player making the move instead of the total positional gain/loss. Consequentially, the differential value would have to be added to an element of a utility vector maintaining running sums. More interestingly, when a player gains a point for each captured opponent piece, that point is subtracted off as a loss from a particular opponent as opposed to dividing the loss among all other opponents. Though, any other additional points gained would have to be distributed as such.

The Java AI takes advantage of an N-player extension of minimax and alpha-beta pruning called "hypermax". The full derivation, details and proofs related to the algorithm are found in reference [2]. Below is pseudocode of a variation that returns the best move to make along with the effective utility vector, the long-term consequences of making that move.

function (effectiveUtilVector, moveToMake) hypermax(node, alphaVector, player, depth)

if node type is win

return (winner utilVector, no move)

else if node type is draw or depth is MaxDepth

return (heuristic utilVector, no move)

(childNodes, movesThatCreatedChildNodes) := Sort(RandomlyShuffle(GenerateChildren(node)))

foreach child in childNodes, move in movesThatCreatedChildNodes

(utilVector, discarded) := hypermax(child, alphaVector, next player, depth + 1)

if first child

effectiveUtilVector := utilVector

moveToMake := move

if utilVector[player] > alphaVector[player]

alphaVector[player] := utilVector[player]

effectiveUtilVector := utilVector

moveToMake := move

if sum of all elements of alphaVector >= 0

break

return (effectiveUtilVector, moveToMake)

(* Initial call *) (discarded, moveToMake) := hypermax(currentGameState, [−∞, −∞, …, −∞], playerToMove, 0)

Hypermax accepts 4 parameters. node is the game state, a vertex of the game tree; at minimum, it's the placement of all the pieces on the game board, a start/mid/end-game configuration. alphaVector is the augmented version of α and β from alpha-beta pruning; each element of alphaVector is the maximum utility that a particular player is assured of; and, for the same reason that alpha-beta pruning stops when α ≥ β (or using a sign-adjusted β, when α + β ≥ 0), hypermax stops when the sum of the elements of alphaVector reaches or exceeds zero; if it reaches zero, then the player obtained the maximum utility remaining considering the amounts already guaranteed to the opponents, making any additional search pointless; if it exceeds 0, then the branch search revealed that the current player will ultimately steel away utility that one of the parent nodes already found a way to guarantee for himself, forcing the parent to avoid the branch completely and again making any additional search unnecessary. player is the player to move, the one that derives the child nodes from node. And, finally, depth is the current search depth, starting from 0 and incrementing to some MaxDepth.

The algorithm begins by checking if the node represents a win configuration. And, if that's the case, an exceedingly large gain will appear in the element of the returned utility vector corresponding to the winner; the losing opponents will be assigned large losses, balancing everything out to zero. If the node represents a draw configuration or if the maximum depth was reached, then the heuristic evaluation function is applied to estimate the utility vector. Win, draw and cutoff nodes are all treated as leaf nodes, those without any children, the player having no move to make.

If the node is not a leaf, then it generates a list of children along with a list of the moves used to produce them. Toward the end, it is possible that a player run out of legal positions. But, per the rules, this doesn't terminate the game. Instead, the list of child nodes will contain a single node identical to the parent node, since the player's position didn't change, and the moves list will contain a single operation: pass to next player.

The returned child list is randomly shuffled and then it is sorted by the element of the utility vectors corresponding to the player. Ordering the children via the heuristic function searches the branches likely to force alphaVector cutoffs early; the hope is that a high short-term gain, such as capturing a lot of opponent pieces, will actually correspond to a high long-term gain. It is possible that some of the child utility vectors will contain equal values, especially early on in the game. This is why they are randomly shuffled before sorting; it prevents the AI from always responding in the same way to a given position and it also prevents branches of equal estimated value from being inspected in some biased order that may actually slow down the search. childNodes and movesThatCreatedChildNodes is shuffled and sorted exactly the same way; the elements still pair up properly afterwards.

Next, for each child node and the move that produced it, hypermax is recursively called to obtain the node's utility vector; the returned move is discarded. The utility vector may be an approximation produced by the heuristic function or it may be an actual known result, a winner utility vector. In either case, they are selected vectors by the procedure about to be discussed. It should also be noted that when alphaVector is passed into hypermax, it is deep copied, as opposed to passing in a pointer to a common object; alphaVector is modified by hypermax and the changes only propagate to forward.

alphaVector is a lower bound, each element representing the maximum utility that a particular player discovered thus far. Note that in the initial call, all of the elements of alphaVector are set to −∞, the absolute minimum bound. The loop updates alphaVector when more utility for the player was found. And, it simultaneously records the associated utility vector and the move that maximized the utility. The loop exits early if the elements of alphaVector reach or exceed zero as discussed above.

The NES AI does not respond identically when provided with the same game board position. As discussed above in the context of the Java AI, when the heuristic function equally values a best subset of the immediate moves, the machine should randomly pick among them to avoid bias and to make gameplay more interesting. In addition, the NES AI appears to make moves that advance its pieces toward opponent pieces, suggesting that the heuristic favors aggressiveness. It also tends to creep along the game board edges perhaps for the shielding advantage.

The NES AI provides 5 levels of difficulty. For a tree search, the simplest way to increase difficulty is by deepening the search. Under that approach, quite unfortunately, the response time increases exponentially with difficulty. But, there is an expectation that the machine should be allowed think longer when set to higher levels for the same reason that humans are allowed to do so. Nonetheless, the NES AI appears to respond almost instantaneously regardless of the difficulty setting. This suggests that instead of searching deeper on higher levels its probably just handicapping itself on lower levels, perhaps by randomly selecting moves identified as suboptimal. Or, it could be doing a mix of things such as using a slightly better heuristic technique at higher difficulties while at the same time crippling itself on lower ones.

The Java AI sets the hypermax cutoff depth to the number of players plus one. This enables it to examine the consequences of everyone taking a single turn followed by the AI taking an extra one. The idea is that if the NES AI is only inspecting the immediate move, the Java AI will strive for 2. Unfortunately, due to the high branching factor of the game tree, increasing the depth much further is not that practical. But, as demonstrated by the videos, this cutoff setting is sufficient to beat the NES AI, including multiple instances of it.

Unlike a real slot machine, by using skilled timing, the player can direct the Bonus Game to any desired outcome with perfect accuracy. Consequentially, if the Java AI happens to land on a bonus game square, it calls hypermax with an additional parameter indicating that the child nodes should include a jump of one of its pieces to any free square on the board (3 matching cherries), swapping all pieces with those of another player (3 matching Arcadia Systems logos), and the standard clone and jump moves (3 matching Spots). The extra parameter only applies to the immediate children; the subsequence moves are the standard ones. The returned move-to-make includes the required Bonus Game outcome and the Java AI plays it accordingly. Subsequently, it executes the acquired bonus move.

The Java AI plug-in uses the Nintaco API to manipulate CPU Memory and to receive frame rendered events. All memory addresses were discovered through exploration with the Nintaco Debugging Tools and the information has been added to the Data Crystal ROMhacking.net wiki. In the source, they appear as constants within the Addresses interface.